Companies spent 33.9 billion dollars on generative AI per year. Yet 74% of teams struggle with implementation. The problem is not adoption. The problem is making AI testing tools actually work.

QA teams face specific challenges when testing generative AI systems. Traditional testing methods fail when applications generate unpredictable outputs. Test case creation becomes exponentially harder. Test maintenance costs spiral. Teams need new approaches.

Why does testing generative AI differ from testing software?

Testing deterministic software follows predictable patterns. Input X produces output Y every time. Automated testing validates these patterns reliably.

Generative AI breaks this model. The same prompt produces different outputs across runs. An AI model generates varied responses to identical inputs. Test scripts written for consistent results become useless.

| Traditional Software Testing | Generative AI Testing |

| Same input produces same output | Same input produces varied outputs |

| Pass/fail criteria are clear | Quality evaluation is subjective |

| Test once, run thousands of times | Tests need constant updates |

| Bugs are reproducible | Failures are probabilistic |

| Test data is static | Test data must evolve continuously |

| Coverage is measurable | Coverage is theoretical |

Non-deterministic outputs present the first major hurdle. The same question gets different answers. Results vary by model temperature. Context affects generation quality. Output format shifts unpredictably. These variations make traditional pass/fail testing impossible.

Complex evaluation criteria compound the problem. Correctness becomes subjective. Quality metrics lack standards. Human judgment is required often. Edge cases multiply infinitely. A customer service bot might give ten different correct answers to one question, each with a different tone and detail level.

Integration complexity rounds out the core challenges. AI systems connect to multiple services. API responses change dynamically. Performance varies by load. Failure modes hide easily in the layers of integration between components.

🤖 Read also: Test Case Generation Using LLMs

The test case generation problem

Manual testing efforts balloon when testing AI applications. Generative AI models need testing across unlimited input variations. Creating comprehensive test cases manually is impossible.

Teams using generative AI for test case creation face a paradox: they’re testing AI with AI-generated tests. This creates circular validation problems. How do you trust test cases created by the same type of system you’re trying to validate?

Coverage requirements explode in ways traditional software never experienced. AI handles infinite input combinations. Edge cases multiply exponentially. User intent varies broadly. Context dependencies are endless. A simple chatbot might need testing across thousands of conversation flows, each branching into multiple valid response patterns.

Automatic test case generation has limits that quickly become apparent. Generated tests miss real scenarios that humans encounter. AI-generated test cases need validation themselves. Test scripts require human review before deployment. Quality varies significantly between generated tests, with some being useful and others completely missing the point.

Test data generation adds its own complexity. Creating realistic synthetic test data is hard. Data privacy rules limit production data use. Generative adversarial networks help but add another layer of complexity to manage. Test datasets need constant updates as the AI model learns and changes behavior.

Gartner data shows 69% of respondents predict generative AI will impact automated software testing in three years. But implementation challenges (36%), skill gaps (34%), and high costs (34%) block teams now.

Test automation and maintenance costs

Test automation tools designed for deterministic systems struggle with generative AI. Tests break frequently. Maintenance consumes developer time that automation was supposed to free up.

| Cost Factor | Traditional Testing | Generative AI Testing |

| Initial test creation | Medium effort | High effort |

| Test maintenance | 10-15% of testing time | 40-60% of testing time |

| Test stability | Stable over months | Breaks with model updates |

| Validation complexity | Simple pass/fail | Requires interpretation |

| Infrastructure costs | Predictable | Variable and scaling |

| Skill requirements | Standard QA knowledge | AI expertise needed |

The cost calculation shifts dramatically between traditional and AI testing. Traditional software testing works by writing a test once and running it thousands of times. Maintenance cycles are predictable. Pass and fail criteria are clear. Test suites remain stable over long periods.

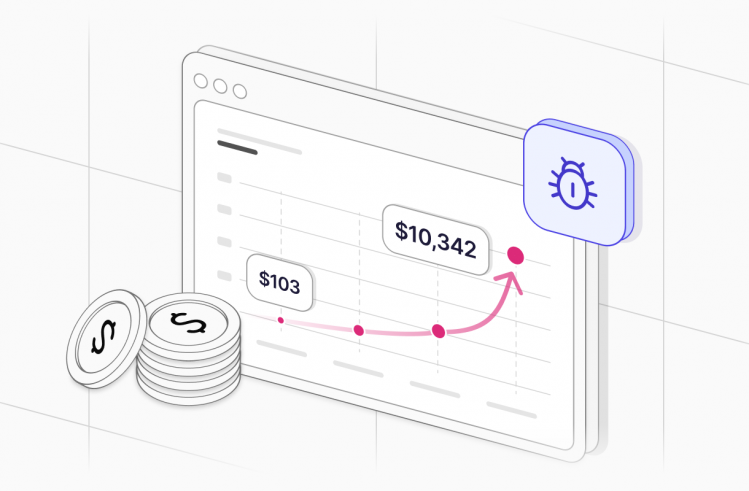

Generative AI testing requires constant updates to tests. Validation criteria shift as the model learns. Results require interpretation rather than simple pass/fail evaluation. Test maintenance becomes a continuous activity consuming 40% to 60% of testing time when working with AI systems. This matches the human time supposedly freed by test automation, creating zero net gain.

Evaluating quality in non-deterministic outputs

How do you test correctness when there’s no single right answer? This is the core generative AI testing challenge that stumps even experienced testers.

A customer service AI bot answers questions. One response is friendly and brief. Another is detailed and formal. Both are correct for the question asked. Writing automated tests that validate both responses while rejecting actually wrong answers requires methods traditional testing never needed.

- Human evaluation provides the gold standard but doesn’t scale. Manual review of outputs works for small pilots

- Similarity metrics offer a middle ground. Comparing generated output to reference answers provides some automation

- Constraint checking catches obvious failures without solving the quality problem. Verifying output meets basic requirements is possible, but passing these checks doesn’t mean the output is actually good

- Model-based evaluation introduces meta-problems. Using AI to evaluate AI outputs runs faster than human review, but raises the question: if you trust AI to evaluate AI, why not trust the original AI?

According to Bain research, accuracy concerns are easing as companies gain confidence. But 75% of companies still struggle finding in-house expertise for AI testing. The evaluation problem remains largely unsolved.

Data challenges specific to AI testing

Testing software applications requires test data. Testing generative AI systems requires orders of magnitude more data with higher complexity. 70% of high-performing AI teams report difficulties with data, according to McKinsey. Problems include data governance, integration speed, and insufficient training data.

- Test data generation needs dwarf traditional requirements. Volume requirements are massive compared to conventional testing. Diversity must match production usage patterns. Edge cases need representation in test datasets. Privacy constraints limit real data use for testing purposes.

- Synthetic test data solves some problems while creating others. Generated data lacks the messiness of real-world inputs. Patterns may not match what production systems encounter. AI-generated data carries AI-generated biases. Validating synthetic data creates circular logic problems.

- Production data has limitations that make it hard to use for testing. Privacy regulations restrict use of real customer data. Sensitive information needs masking before testers can access it. Data volume creates infrastructure costs for test environments. Subsetting to reduce size loses important patterns that only appear at scale.

- Data versioning becomes exponentially complex with AI systems. Training data changes affect test results in unpredictable ways. Model versions multiply as teams experiment. Test data must track with specific model versions. Baseline comparisons become difficult when both data and model change between test runs.

57% of organizations estimate their data is not AI-ready, per Gartner research. This directly impacts testing capabilities and explains why teams struggle even when they have the right tools and expertise.

Integration testing across AI systems

Generative AI applications rarely work alone. They integrate with databases, APIs, other AI models, and traditional software systems. Each integration point multiplies testing complexity in ways that compound quickly.

Multi-model dependencies create the first problem. One AI model calls another AI model. Outputs from the first feed into the second as inputs. Error propagation becomes hard to trace through multiple AI layers. Debugging becomes exponentially harder with each AI component in the chain.

External service connections add traditional integration problems on top of AI complexity. APIs change without notice from third-party providers. Rate limits affect test execution timing. Network variability creates test flakiness. Authentication adds complexity to test setup and maintenance.

Performance unpredictability makes capacity planning nearly impossible. Response times vary widely based on factors outside test control. Load affects generation quality in non-linear ways. Scaling behavior defies prediction. Costs spiral during testing as API calls multiply.

State management in AI agents creates testing nightmares. AI agents maintain context across interactions. Session state affects outputs in ways that are hard to reproduce. History dependencies complicate test isolation. Resetting state between tests often fails to actually clear AI memory.

Skills gaps and resource constraints

The advance of generative AI outpaces QA team training. Testers skilled in traditional testing lack AI-specific knowledge. AI engineers don’t know testing best practices. This skills gap shows up in every testing challenge.

Bain research shows companies average 160 employees working on generative AI, a 30% increase from the prior year. But 75% struggle finding needed expertise. The talent shortage affects testing more acutely than development because fewer people specialize in AI testing.

Teams often choose between hiring AI experts who don’t know testing or testers who don’t understand AI. Neither choice works well, leading to failed pilots and abandoned AI testing initiatives.

Tool limitations in current testing platforms

Existing test automation platforms weren’t built for generative AI. Most testing tools assume deterministic behavior. AI-powered test tools are emerging but remain immature and fragmented.

- Traditional testing tools fail at basic AI testing tasks. They were built for deterministic testing where the same input always produces the same output. They require exact output matching that doesn’t work with generative AI. They cannot handle output variation as anything other than test failure. Probabilistic validation is simply not supported in conventional frameworks.

- Generative AI testing tools are appearing but have major gaps. The market is immature and fragmented with no clear leaders. Standards don’t exist for what good AI testing looks like. Integration with existing development workflows is complex and unreliable. Costs are high and unpredictable as vendors experiment with pricing models.

- Test management needs have evolved beyond what current platforms support well. Tracking AI-specific test metrics requires custom solutions. Managing probabilistic results doesn’t fit existing reporting. Versioning across models and data has no established patterns. Organizing non-deterministic test suites challenges conventional test management thinking.

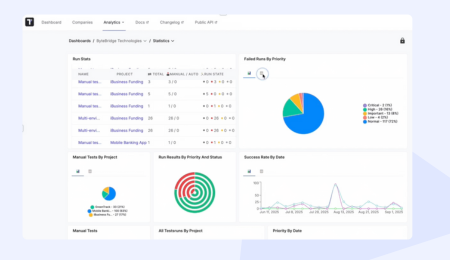

Platforms like Testomat.io help by centralizing test management across manual and automated testing. Organizing test cases, tracking execution, and managing teams working on both AI and traditional testing provides value even when AI-specific features are still maturing. But comprehensive test management still requires adaptation for AI testing challenges that existing features weren’t designed to handle.

Security and safety testing requirements

AI systems introduce new security vulnerabilities that traditional security testing misses completely. Prompt injection attacks fool models into revealing sensitive data. Adversarial inputs cause harmful outputs. Testing these risks requires methods that don’t exist in conventional security testing toolkits.

| Security Risk | Description | Testing Challenge |

| Prompt injection | Malicious prompts bypass safety controls | Attack vectors are infinite and unpredictable |

| Data poisoning | Corrupted training data affects model behavior | Subtle effects hard to detect in testing |

| Model extraction | Attackers steal AI capabilities through queries | Difficult to monitor and prevent |

| Output manipulation | Adversarial inputs generate harmful content | Edge cases multiply infinitely |

| Privacy leakage | Models reveal training data | Statistical tests are incomplete |

Unique attack surfaces multiply faster than defenses appear. Prompt injection vulnerabilities have no equivalent in traditional software. Training data poisoning risks affect model behavior in subtle ways. Model extraction threats allow competitors to steal AI capabilities. Output manipulation creates potential for fraud and abuse.

Testing complexity grows because attack vectors multiply infinitely. Adversarial examples are hard to predict or generate systematically. Defensive measures affect functionality in ways that require testing trade-offs. Security improvements often reduce performance or increase costs, forcing difficult decisions.

Compliance requirements are emerging faster than testing approaches to validate them. Regulatory frameworks are appearing in Europe, California, and other jurisdictions. Standards are not yet established for what constitutes adequate AI testing. Auditing AI decisions is complex without clear audit trails. Liability questions remain open, making it unclear who is responsible when AI systems fail.

Hallucination detection and mitigation

AI hallucinations happen when models generate plausible-sounding but factually incorrect information. Testing must detect these failures before production deployment. Research shows hallucinations (15%) are among top technical reasons AI pilots fail, along with data privacy (21%) and ROI disappointment (18%). Solving hallucination detection remains an active research area without clear production solutions.

- Fact-checking provides one detection approach but has severe limits. Verifying against known truth sources works only for facts in those sources. Checking internal consistency catches some hallucinations. Validating referenced data helps but doesn’t catch subtle errors. The process is time-consuming and incomplete at best.

- Confidence scoring offers partial solutions. Models can output confidence levels alongside answers. Low confidence flags outputs for human review. But high confidence doesn’t guarantee accuracy. Models confidently hallucinate regularly. Gaming the scoring system is possible for adversarial inputs.

- Human validation remains the gold standard with impossible economics. Manual review of outputs provides the most reliable detection. Subject matter expert verification catches domain-specific hallucinations. But this approach is expensive and slow. It doesn’t scale to production volumes where millions of outputs need validation.

- Automated validation has hard limits that teams keep hitting. Systems cannot verify all facts automatically against reliable sources. Novel claims lack validation sources by definition. Context affects what counts as correct. Edge cases hide easily in the vast space of possible outputs.

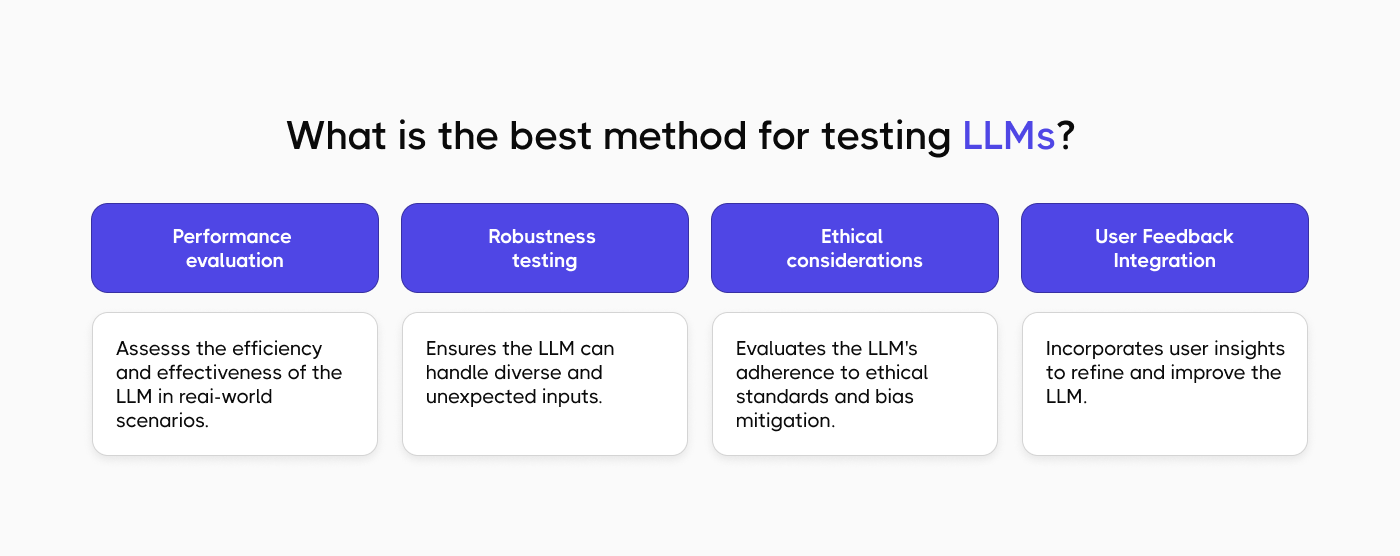

Best practices for testing generative AI applications

Despite challenges, teams are finding approaches that work. Success comes from adapting traditional testing while accepting AI limitations rather than fighting them.

- Start Small. Don’t try to test everything at once. Pick one clear use case and do it well. Learn by doing. Small wins help you understand what works and what doesn’t. Once you see results, it’s easier to grow and invest more. Mistakes will happen, use them to improve.

- Mix Different Ways of Testing. Automation is great for repetitive checks. People are better at judging quality and meaning. The best results come from using both together. Keep testing, learn from what you see, and adjust as you go.

- Grow Skills Step by Step. Help your current testers learn the basics of AI. Bring in experts when things get more complex. Mix different skills in one team so people can learn from each other. Sharing knowledge helps everyone move faster.

- Use Tools That Actually Help. Don’t overcomplicate things. Use tools to stay organized as things grow. Try AI-specific tools only if they solve a real problem. Regular automation tools still work for simple, predictable parts.

Teams applying these practices report faster progress and fewer failed pilots than those attempting comprehensive AI testing immediately.

How test management helps address AI challenges

Comprehensive test management becomes more important when testing complexity increases. AI testing tools that handle both automated and manual testing help teams navigate AI testing challenges that overwhelm ad-hoc approaches.

- Organization provides value through centralization. Test cases across AI and traditional systems live in one place. Coverage tracking works across model versions and updates. Test data and environments get managed systematically. Documentation of validation approaches prevents knowledge loss as teams change.

- Collaboration improves when everyone works in shared systems. Developers, testers, and AI specialists see the same information. Results sharing across teams prevents duplicate work. Status communication happens through the platform rather than meetings. Coordinating efforts becomes efficient rather than requiring constant synchronization.

- Visibility prevents surprises and enables faster response. Teams track what’s tested and what needs attention. Monitoring test execution trends reveals patterns. Bottlenecks become visible quickly. Progress reporting to stakeholders happens through dashboards rather than status meetings.

- Adaptation keeps testing aligned with changing needs. Adjusting testing strategies based on results happens within the platform. Incorporating new approaches is easy when the platform is flexible. Scaling efforts as needed doesn’t require rebuilding infrastructure. Continuous improvement happens through iteration rather than periodic overhauls.

Testomat.io provides unified test management for teams testing generative AI applications alongside traditional software. Centralized management helps teams handle increased complexity without losing track of testing status or drowning in coordination overhead.

Testing across the AI lifecycle

Testing generative AI is not a one-time activity before deployment. AI models update, retrain, and evolve continuously. Testing must happen throughout the AI system lifecycle with different focus at each stage.

| Phase | Testing Focus | Key Activities | Success Criteria |

| Development | Model foundations | Validate training data quality, test initial outputs, establish baselines | Clean data, expected behavior patterns |

| Deployment | Production readiness | Integration testing, performance validation, security assessment | All systems connected, meets load requirements |

| Production | Continuous monitoring | Output quality tracking, error detection, user feedback | Quality maintained, errors caught quickly |

| Model updates | Change validation | Regression testing, comparative analysis, impact assessment | No degradation, improvements validated |

Development phase testing validates foundations before deployment. Model training needs validation to ensure data quality and learning effectiveness. Initial outputs get tested against expected behavior patterns. Baselines get established for comparison as the model evolves.

Deployment phase testing verifies production readiness. Integration testing validates connections to other systems. Performance validation ensures the model handles expected load. Security assessment identifies vulnerabilities before exposure. User acceptance testing confirms the AI meets actual user needs.

Production monitoring becomes continuous testing at scale. Output quality tracking catches degradation over time. Performance monitoring detects scaling issues. Error detection triggers investigation of failures. User feedback collection provides real-world validation that lab testing misses.

Model updates require comprehensive regression testing. Testing after changes validates that improvements didn’t break existing capabilities. Comparative validation measures new versions against old ones. Impact assessment quantifies changes in behavior. Rollback readiness ensures teams can revert if updates cause problems.

Each phase requires different testing strategies and tools. Supporting this continuous cycle demands test management that spans the entire lifecycle rather than treating testing as a pre-deployment gate.

Conclusion

Testing generative AI applications challenges QA teams in ways traditional software testing never did. Non-deterministic outputs, exponential test case requirements, skill gaps, and immature tooling create real obstacles that teams face daily.

Success requires accepting that AI testing differs fundamentally from traditional software testing. Perfect testing is impossible when outputs are non-deterministic. Good enough testing is achievable with realistic expectations and appropriate resources.

Test management platforms help by providing structure when complexity increases. Organizing test cases, tracking execution, and coordinating team efforts matters more when testing challenges multiply beyond what individuals can hold in their heads.

Testing generative AI will remain challenging for the foreseeable future. But the challenges are solvable with realistic expectations, appropriate resources, and willingness to evolve testing practices as AI capabilities advance. The teams that figure this out gain competitive advantages while others struggle with buggy AI deployments.

Frequently asked questions

How does generative AI in testing help QA teams improve test coverage and testing efficiency?

Generative AI for software testing helps teams automatically generate test cases covering scenarios manual testing efforts would miss. AI can generate synthetic test data using generative adversarial networks, creating diverse test datasets quickly. The integration of generative AI into QA enables test automation tools to create test cases across edge cases and input variations. Teams using generative ai tools report improved test coverage but face challenges validating ai-generated test quality.

Can generative AI tools automatically create test cases that replace manual testing efforts entirely?

No. While ai can automate repetitive test creation tasks and generative ai can generate initial test suites, human judgment remains necessary. Exploratory testing still requires testers who understand software system behavior. Using generative ai tools to automatically generate test cases works for basic scenarios but misses nuanced quality issues. The future of generative ai in testing involves hybrid approaches combining ai-based testing with human expertise rather than full automation.

How do test automation platforms integrate generative AI to enhance test data generation and test suite creation?

Test automation platforms are incorporating generative ai to create synthetic test data addressing privacy constraints on production data. AI capabilities enable automatic test case generation from requirements or existing code. Generative ai-based testing tools use ai to generate test scenarios covering diverse inputs. The power of generative ai helps testing across multiple configurations and environments. Teams implementing generative ai see benefits in test data generation speed but struggle with test quality validation.

What testing environment and testing capabilities do teams need when using generative AI for software testing by automating test creation?

Teams need robust testing environments supporting both traditional testing and ai-driven test execution. Testing strategies must accommodate non-deterministic ai agent outputs. QA teams require new testing capabilities including prompt engineering skills and statistical validation methods. The use of generative ai demands test management tracking ai-generated test performance. As ai continues to evolve and generative ai continues advancing, testing software requires continuous adaptation of testing efforts and comprehensive test approaches.